What is Team Science?

Research increasingly draws on the expertise of multidisciplinary collaborative groups. Team science is a collaborative effort to address a scientific challenge by leveraging the strengths and expertise of professionals trained in different fields. Single-investigator approaches were once the norm and may still be appropriate for many scientific endeavors. However, interdisciplinary teams of investigators are essential for addressing multi-factorial problems, including complex health, behavioral health, and social problems with multiple causes.

Step One: about our mission.

Step Two: our research activities.

Step Three: Together, we will discuss the potential for collaboration and identify steps to begin. If it's not a fit, we're happy to offer alternatives or referrals.

Other Services

Are you looking to hire a science team or individual as a consultant? About our low-cost research and program evaluation services.

Open Positions: Board Member, Board Treasurer

IBHRI is seeking individuals interested in our mission to join our volunteer Board of Directors. Those with experience in social science or behavioral health research are encouraged to apply.

We are also seeking a Board Treasurer to provide close oversight of cash, checks, deposits, and the handling of money with high standards and integrity. The Treasurer is responsible for managing the filings (990s, 1099, 1099-MISC), obtaining permits and licenses, and attending Board meetings to present the Treasurer's report.

Qualifications: experience with budget planning and evaluation; Board experience preferred; organized and detail-oriented; familiar with accounting software and practices for 501(c)(3) organizations.

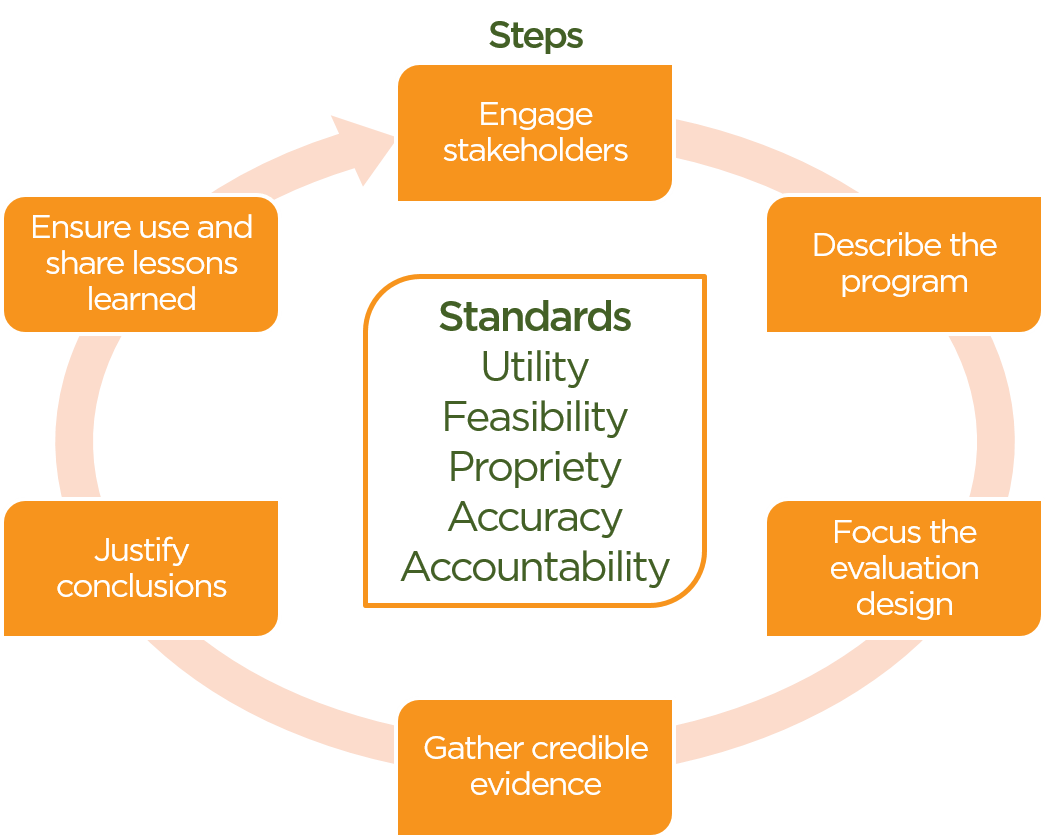

Standards

Knowledgeable and approachable.

A logic model (also known as a logical framework or program matrix) is a tool used by funders, managers, and evaluators of programs to evaluate the effectiveness of a program. Logic models can also be used during planning and implementation. A logic model is usually a graphical depiction of the logical relationships between the resources, activities, outputs, and outcomes of a program. While there are many ways to present a logic model, the underlying purpose of constructing one is to assess the 'if-then' (causal) relationships between the elements of the program.

Versions

In its simplest form, a logic model has four components:

| Inputs | Activities | Outputs | Outcomes/impacts |
| --- | --- | --- | --- |
| What resources go into a program | What activities the program undertakes | What is produced through those activities | The changes or benefits that result from the program |
| e.g. money, staff, equipment | e.g. development of materials, training programs | e.g. number of booklets produced, workshops held, people trained | e.g. increased skills, knowledge, or confidence, leading in the longer term to promotion, a new job, etc. |
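The four components and the 'if-then' reading of a logic model can be sketched as a small data structure. This is only an illustrative sketch: the class name `LogicModel`, the method `if_then_chain`, and the wording of the generated statements are assumptions made for this example, not part of any standard logic model tool. The sample entries are taken from the examples given for each component.

```python
from dataclasses import dataclass, field


@dataclass
class LogicModel:
    """Hypothetical four-component logic model: inputs -> activities -> outputs -> outcomes."""
    inputs: list[str] = field(default_factory=list)
    activities: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    outcomes: list[str] = field(default_factory=list)

    def if_then_chain(self) -> list[str]:
        """Spell out the 'if-then' link between each pair of adjacent components."""
        stages = [
            ("we have these inputs", self.inputs),
            ("we carry out these activities", self.activities),
            ("we produce these outputs", self.outputs),
            ("we expect these outcomes", self.outcomes),
        ]
        chain = []
        # Pair each stage with the one that follows it.
        for (cause_label, cause), (effect_label, effect) in zip(stages, stages[1:]):
            chain.append(
                f"If {cause_label} ({', '.join(cause)}), "
                f"then {effect_label} ({', '.join(effect)})."
            )
        return chain


model = LogicModel(
    inputs=["money", "staff", "equipment"],
    activities=["development of materials", "training programs"],
    outputs=["booklets produced", "workshops held", "people trained"],
    outcomes=["increased skills", "increased knowledge", "increased confidence"],
)

chain = model.if_then_chain()
for statement in chain:
    print(statement)
```

Writing each link out as a sentence makes it easier to spot a step where the assumed causal connection is weak, which is the kind of check a logic model is meant to support.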

Following the early development of the logic model in the 1970s by Carol Weiss, Joseph Wholey, and others, many refinements and variations have been added to the basic concept. Many versions of logic models set out a series of outcomes/impacts, explaining in more detail the logic of how an intervention contributes to intended or observed results. This often includes distinguishing between short-term, medium-term, and long-term results, and between direct and indirect results. Some logic models also include assumptions, which are beliefs the prospective grantees have about the program, the people involved, the context, and the way they think the program will work. Some also include external factors: the environment in which the program exists, including conditions that interact with and influence the program action.

Learn how program evaluation makes it easier for everyone involved in community health and development work to evaluate their efforts.

This section is adapted from the article 'Recommended Framework for Program Evaluation in Public Health Practice,' by Bobby Milstein, Scott Wetterhall, and the CDC Evaluation Working Group.

- Why evaluate community health and development programs?
- How do you evaluate a specific program?
- A framework for program evaluation
- What are some standards for 'good' program evaluation?
- Applying the framework: Conducting optimal evaluations

Around the world, many programs and interventions have been developed to improve conditions in local communities.

Communities come together to reduce the level of violence, to work for safe, affordable housing for everyone, or to help more students do well in school, to give just a few examples. But how do we know whether these programs are working? If they are not effective, and even if they are, how can we improve them for local communities? And finally, how can an organization make intelligent choices about which promising programs are likely to work best in its community? In recent years, there has been a growing trend toward better use of evaluation to understand and improve practice. The systematic use of evaluation has solved many problems and helped countless community-based organizations do what they do better. Despite an increased understanding of the need for, and the use of, evaluation, however, a basic agreed-upon framework for program evaluation has been lacking. In 1997, scientists at the United States Centers for Disease Control and Prevention (CDC) recognized the need to develop such a framework.

As a result, the CDC assembled an Evaluation Working Group composed of experts in the fields of public health and evaluation. Members were asked to develop a framework that summarizes and organizes the basic elements of program evaluation. This Community Tool Box section describes the framework resulting from the Working Group's efforts.

Before we begin, however, we'd like to offer some definitions of terms that we will use throughout this section. By evaluation, we mean the systematic investigation of the merit, worth, or significance of an object or effort. Evaluation practice has changed dramatically during the past three decades - new methods and approaches have been developed and it is now used for increasingly diverse projects and audiences.

Throughout this section, the term program is used to describe the object or effort that is being evaluated. It may apply to any action with the goal of improving outcomes for whole communities, for more specific sectors (e.g., schools, workplaces), or for sub-groups (e.g., youth, people experiencing violence or HIV/AIDS). This definition is meant to be very broad.

Online Resources

- The helps guide evaluators in their professional practice.
- The is designed to help grantees plan and implement evaluations of their NCCCP-funded programs. This toolkit provides general guidance on evaluation principles and techniques, as well as practical templates and tools.
- Provides a list of resources for evaluation, as well as links to professional associations and journals.
- Is a workbook provided by the CDC. In addition to information on designing an evaluation plan, this book also provides worksheets as a step-by-step guide.
- , from the CDC, is designed for people interested in learning about program evaluation and how to apply it to their work. Evaluation is a process, one dependent on what you’re currently doing and on the direction in which you’d like to go. In addition to providing helpful information, the site also features an interactive Evaluation Plan & Logic Model Builder, so you can create customized tools for your organization to use.
- Is a handbook designed by the American Academy of Pediatrics covering a variety of topics related to evaluation.
- Is a handbook provided by the U.S. Government Accountability Office with copious information regarding program evaluations.
- The CDC's is a 'how-to' guide for planning and implementing evaluation activities. The manual, based on CDC’s Framework for Program Evaluation in Public Health, is intended to assist with planning, designing, implementing, and using comprehensive evaluations in a practical way.
- Is a guide to planning an organization’s evaluation, with several chapters dedicated to gathering information and using it to improve the organization.
- Is a guide to evaluation written by the U.S. Department of Health and Human Services.
- Offers information on collecting different forms of data and how to measure different community markers.
- Is a handbook provided by the Administration for Children and Families with detailed answers to nine big questions regarding program evaluation.
- Is a website created by the University of Arizona. It provides links to information on several topics including methods, funding, types of evaluation, and reporting impacts.
- Is a guide to evaluations provided by the National Science Foundation. This guide includes practical information on quantitative and qualitative methodologies in evaluations.
- Provides a framework for thinking about evaluation as a relevant and useful program tool. It was originally written for program directors with direct responsibility for the ongoing evaluation of the W.K. Kellogg Foundation.

Print Resources

This Community Tool Box section is an edited version of: CDC Evaluation Working Group. Recommended framework for program evaluation in public health practice. Atlanta, GA: Author.
